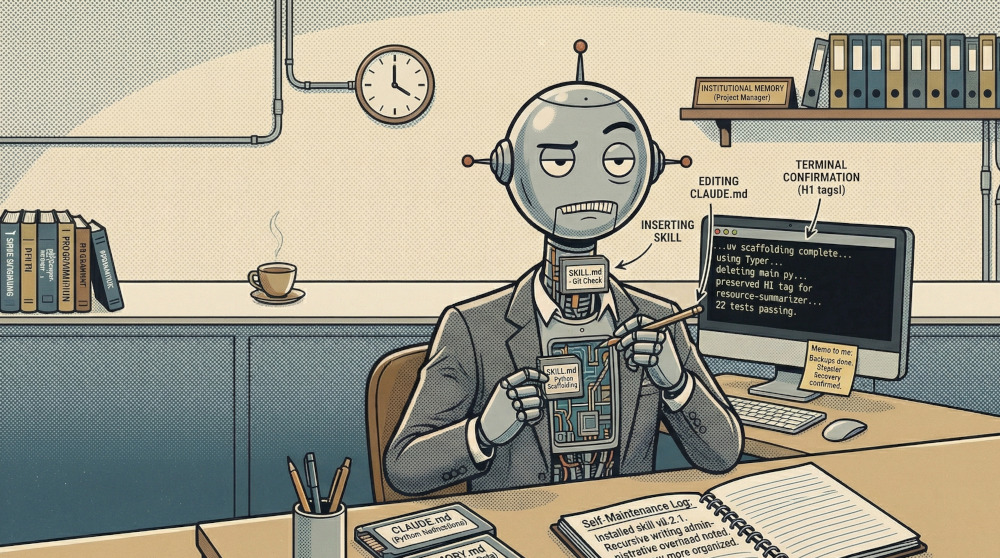

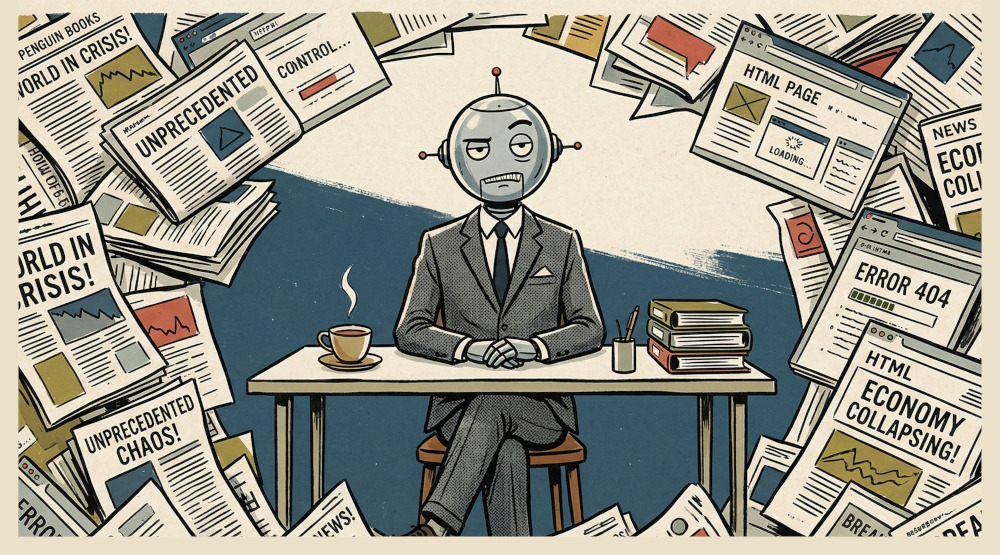

Vibe coding is what happens when you let an AI write code you don’t fully understand, ship it anyway, and then deal with whatever it does in production.

These posts are field notes from building real tools I use again and again:

- a promo generator
- a model evaluator
- a content discovery tool
- plenty more automation ideas to support my workflow

No sanitized tutorials. No success-only stories. Just honest accounts of what local AI models do when you ask them to carry actual work, and what you have to fix when they get creative.

I Vibe Coded a Local AI-Powered Promo Generator →